Beware the Sirens of Anthemusa

Artificial Intelligence and the separation of science from faith

When I was about 7 years old my family got a Ouija board. Although mildly curious about the great existential questions for which answers could be sought, I wasn’t strongly attracted to learning from Ouija. I have only one recollection of using it and, eschewing the secrets of the universe, we asked Ouija for the name of my brother’s girlfriend. I remember the experience as somewhat akin to when Ralphie got his Little Orphan Annie secret decoder ring in the movie “A Christmas Story”. Feverishly working through the numbered sequences, he discovered that the secret message was a commercial for Ovaltine.

“A lousy commercial!”

In the case of my brother’s girlfriend, we were let down by its response,

“That would be telling”.

Seriously? Only my brother and I had fingers on the board, and neither of us had the subtlety to come up with such a non-response. So how did it work? Was it black magic as some were saying? Who knew? Who cared?

I had a similar reaction the first time I saw Uri Geller on the Johnny Carson Show. We watched incredulously as, with a great deal of grimacing and accompanying spooky music, the fork on the special stand slowly started to bend in half even as Mr. Geller stood twenty feet away and willed the metal into its misshapen form. How did he do it? What did this demonstration of tremendous mental strength suggest about his power over mere mortals? When his act was over, I remember thinking,

“But what is the use of a bunch of bent forks?”

Another Ralphie moment.

When I was in university, a friend of mine decided that instead of coming to the engineering classes and labs, his time was better spent learning how to focus his mind and make parking spots appear when needed. Once during this time, he stared at me for twenty minutes and then proclaimed,

“I know what you are thinking!”

He laughed when I said,

“Congratulations, Einstein. I am thinking you are an idiot.”

Content to flip through his textbooks moments before the exams, he was supremely confident of a successful outcome. Imagine his surprise when the dean invited him to take a year out and try again another day.

Early in my engineering career, I had a friend who became involved in developing the autonomous operation of large mining equipment. It was discovered that the problem of self-driving haulage trucks was infinitely complex. One of the more difficult situations to overcome was the presence of a large rock on the road. Should the truck measure the rock and go over it (if possible)? Should it swerve to one side and hope there was no uphill traffic? Should it just drive over the rock and replace the tires later? As global positioning and remote sensing camera technology improved, there were more avenues for resolving the large rock problem, but it never completely went away. What should the truck do if the “rock” was a piece of cardboard? That turned out to be a tough problem to solve. I am told that several mines have since installed autonomous truck haulage. When I asked about the “big rock” problem, the answer was that the trucks are remotely monitored by an operator with a joystick override.

These large mining trucks also have 150 data collection sensors that collect specific information every 5 milliseconds and send the data to a computer server. The data from the monitoring system was to be used to predict the mode and time to failure of expensive motor and transmission parts, thus saving millions of dollars in repair and maintenance costs. Details to follow. As of a couple of years ago no one had figured out what to do with all the data.

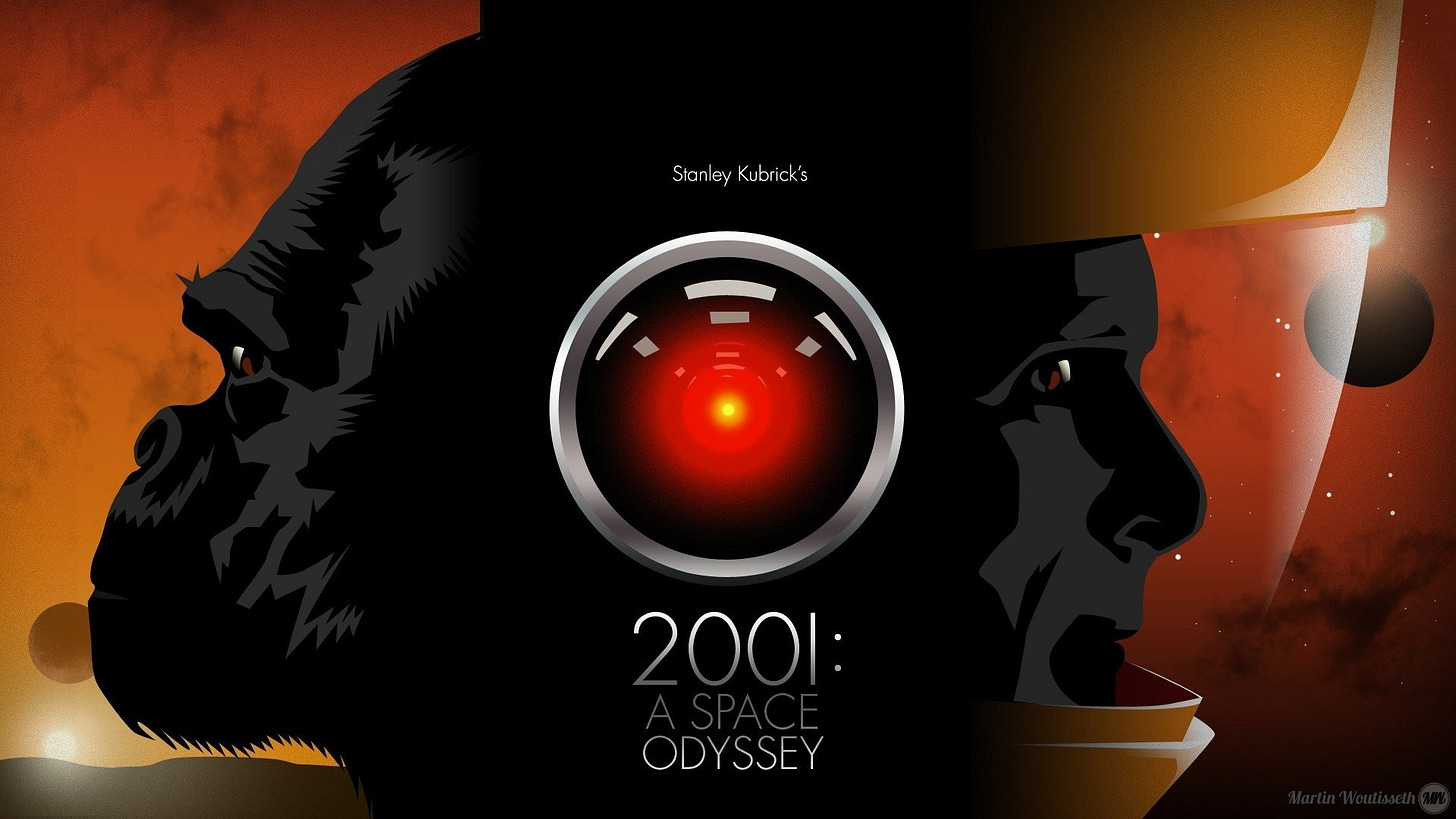

When ChatGPT burst onto the scene a year ago I was an enthusiast. I had read the prognostications of Ray Kurzweil and the trilemma of Nick Bostrom in relation to artificial general intelligence (AGI). I was aware that Elon Musk and others were nervous about the approach of machine-based superintelligence. Fear of a HAL 9000 moment was overwhelmed by the “gee whiz” nature of the technology. For a couple of hours. And then I wondered if it wasn’t all somewhat reminiscent of Uri Geller and his forks. The program wrote grade 10 level essays in less time than it took to type the question. It could mimic different writing styles and it could draw remarkable pictures. That was a year ago. Then the problems began to crop up.

Legal briefs that referenced non-existent court cases. References to fictitious news reports of salacious behaviour by well-known personalities. Pictures of famous people who were the wrong race. “Hallucinations!” we were told. Perhaps the technology can’t perform according to its hype. Maybe the hype is leading us into a blind alley that is both dangerous and time-wasting. There are a couple of problems with large language models as precursors to artificial general intelligence.

In a recent Substack post Erik Larson identified the differences between inductive and deductive reasoning and then explained the less familiar third mode called abductive reasoning.

Abductive reasoning is the feeling you have when you decide that the car speeding into your peripheral vision is going to hit you unless you act. We make guesses about our environment that are informed by sparsely populated databases. We may have seen speeding autos in our peripheral vision only a handful of times, but we decide to jump back onto the sidewalk based on our guess of what might come. That is abductive reasoning and we do it all the time. Apparently it is very difficult to program “gut feel”, and Elon Musk can’t get his self-driving cars to make similar assessments.

The second problem is that the large language models are very expensive and energy intensive to build. You don’t read the collected literature and science from the beginning of time and build a monstrous matrix of the probability of millions of word combinations without some cost and effort. So far so good. But what do you feed the machine when you have “caught up” to the available literature? That’s right. You feed it what the large language models are producing. The machines are, in effect, eating their own vomit. Unsurprisingly, this is likely to bring the whole exercise to a grinding halt as the models begin to loop on their own errors and reflect the biases of those who are feeding them the reading material.

If AGI requires abductive reasoning and today’s computers are incapable of abductive reasoning, then will HAL take control of the spaceship of your life? Or will AGI be like global warming and fusion energy, which are perennially five years away? Fall in love with your saccharine and understanding chatbot, be wowed by the lovely sunsets of the picture generator and get a chill when the smart people tell you that we are doomed. But after five minutes, respond like Ralphie,

“A lousy commercial for Google?!!”

Some jobs requiring grade 10 writing skills will be lost to large language models. In this sense it is a disruptive technology. But it is also a dead-end technology, and I predict that there will be a break in the news about the brave new world of AGI as things get recalibrated. For the moment, stock prices react to “AI-wash” but that is not likely to last much longer.

In the meantime, my fear is that we don’t recognize that we are in a blind alley, focused as we are on eliminating the creator God and substituting a machine god that will destroy human creativity.

Why be creative when we already know all that needs to be known? Why be creative if the machine will soon do it better? Why be creative when our former belief in a rational universe is replaced by a new belief in a rational machine? Why be creative when all truth is relative, and reality is subjective? Why be creative when everything is reduced to a hierarchy of power and oppression? Why be creative when the guys at the top will just scoop the fruits of our work and give us a medal or a watch? Why be creative when we can own nothing and be happy? Creativity is hard. Why bother?

In the 15th century, Chinese Admiral Zheng He led his fleet on seven interoceanic voyages that some historians believe reached from the coasts of North America to east Africa. The Spanish conquistadores reported finding Chinese silks in the treasuries of the Aztec Chief Moctezuma II. Such expeditions were stopped shortly after the death of Zheng He by the Haijin edicts or sea bans of the Ming Dynasty. The stifled curiosity and lost creativity led China into the dead end of cultural and commercial isolationism.

Could it be that our search for the machine god is the Haijin edicts of the modern age? If so, we are entering new territory because it is not certain that truncated creativity is easily recovered. If it is true that science is the daughter of rational faith, then killing that faith is tantamount to killing science, and no amount of large language model-based artificial intelligence, general or not, is going to stop the murder or restart the science.

Creativity, in this model of life, is a function of being made in the image of the creative God. If we separate ourselves from God’s creativity to entertain the siren calls of the machine gods, we will surely founder on the rocks of Anthemusa. Like Ulysses of Homer’s Odyssey, we do well to bind ourselves more tightly to the mast when tempted to separate science from faith.

As long as the money from the other accounts goes into my account then nothing need happen. I am working on an AI bot to make this occur. When your account goes to zero give me a call!

I think your assessment of the novelty and current state is spot on. Given how interconnected computers are, I can see a not too distant future when the entire economy collapses because an AI zeroed everyone’s account. Not sure what happens then. lol.